There’s a quiet shift happening in financial advisory.

Not the kind that makes headlines. The kind you notice slowly. One tool here, one faster report there, one client expecting quicker answers than before. And at some point, you realise the way advisors work doesn’t feel the same anymore.

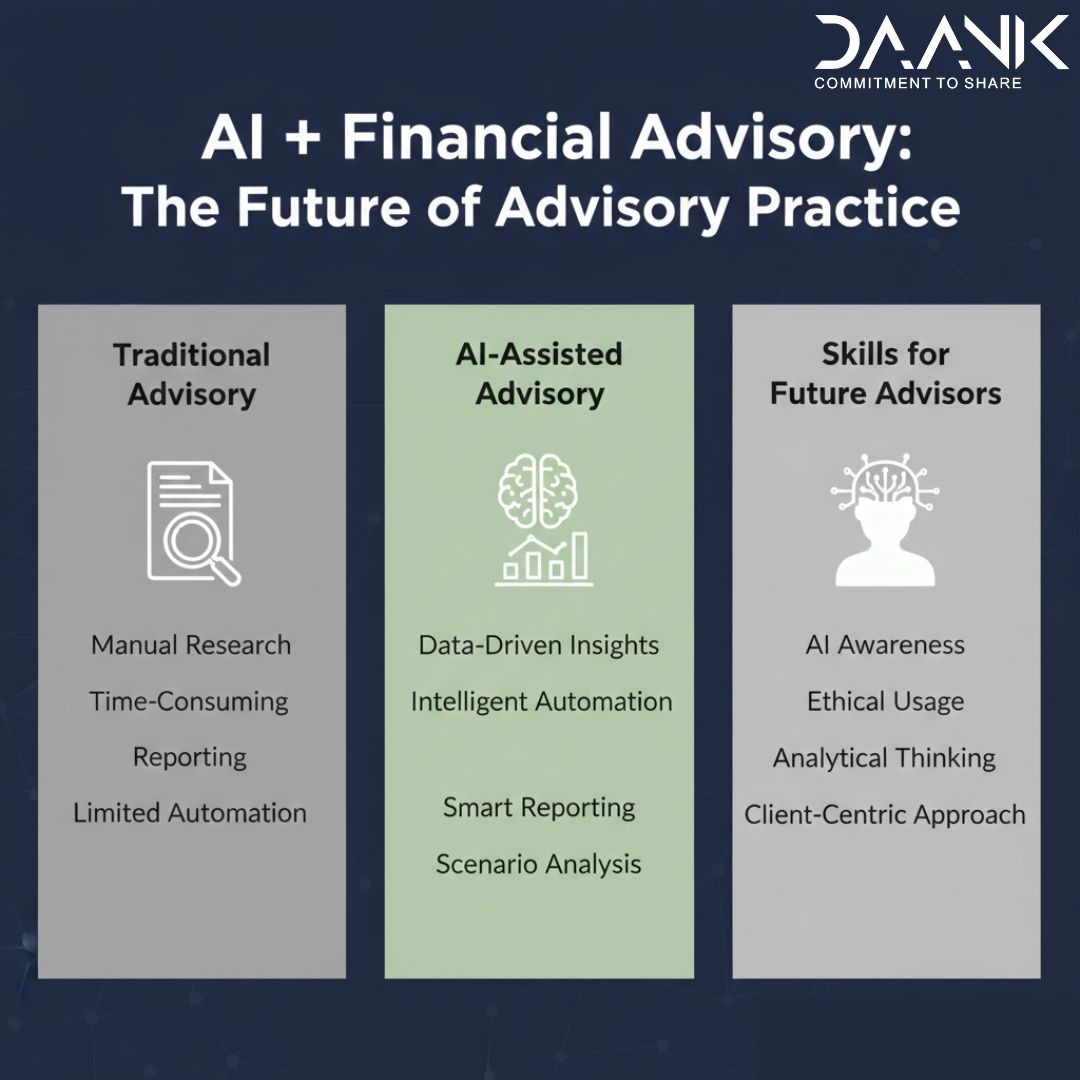

Technology used to sit in the background. Now it’s starting to shape the job itself. AI is a big part of that shift.

Most advisors don’t wake up thinking about AI. They think about clients, portfolios, markets, and deadlines. But AI is already slipping into all of that. Research is getting faster. Reports are cleaner. Communication is sharper. And once you get used to that level of efficiency, it’s hard to go back to slower ways of working.

That’s exactly why more structured learning around AI in finance is starting to matter, even for people who never saw themselves as “tech-oriented.”

So the real question is not whether AI matters. It’s whether you actually understand what it’s doing.

When you strip away the hype, AI is most useful in the parts of the job that usually take up time and energy. It can go through large datasets in seconds, pull out patterns, summarise long reports, and even help draft client notes you were going to write anyway. It also makes it easier to test different risk scenarios without spending hours building them manually.

None of this replaces the advisor. It just removes friction. And if we’re honest, a lot of advisory work has friction.

But this is also where things get slightly uncomfortable.

Just because something is faster doesn’t mean it’s right. AI can give you an answer that looks clean and confident. It’s structured, well-worded, and sounds convincing. And that’s exactly why it can be misleading.

In finance, “this looks right” is not a standard you can rely on.

This is where a lot of advisors feel stuck. They know AI is useful, but they’re not fully sure how much to trust it or how deeply to use it. Without some guidance or framework, it’s easy to either overuse it or avoid it completely.

Understanding AI is not just about using it. It’s about knowing when not to trust it. That shift is subtle, but it matters more than people think.

In the past, being a strong advisor was largely about analysis. Today, analysis is easier to access. What’s becoming more valuable is judgment.

You still need to pause and question what you’re seeing. You need to check if the logic actually holds up, spot when something feels slightly off, and most importantly, explain your thinking in a way that clients can trust. AI can generate outputs, but it cannot take responsibility for them. That part still sits with you.

Clients, interestingly, may never ask you about AI. They won’t ask what tools you’re using or how your reports are generated. But they will notice the difference in how you work. They’ll notice how quickly you respond, how clear your insights are, and how well you explain things.

And they will compare, even if they don’t say it out loud.

That’s how expectations change. Not through big announcements, but through small, consistent experiences.

Where many people go wrong is in how they approach AI. Some ignore it completely, assuming it’s not relevant yet. Others jump in too quickly and start trusting everything it produces. Both approaches miss the point.

AI is not a shortcut. It’s a tool that needs context and judgment.

A simple way to think about it is this: AI can make you faster, but it doesn’t automatically make you better. If anything, it raises the standard of what “better” looks like.

This is also why we’re seeing a rise in platforms that focus specifically on practical, finance-focused AI learning rather than generic tech knowledge. The gap is no longer awareness. It’s application.